Google’s new AI image-generation model Nano Banana Pro is so realistic, it’s terrifying

Following in the footsteps of Grok, GPT Image and Nano Banana, Google has now dropped its newest AI (artificial intelligence) image-generation model, Nano Banana Pro (Gemini 3 Pro Image) — and honestly, the results are so realistic they’re more worrisome than wowsome.

Nano Banana Pro is billed as a “state-of-the-art image generation and editing model” that excels in visual design, world knowledge and text rendering. It opens an entirely new world for creatives and businesses to experiment with — if used responsibly. But the level of realism it produces is deeply alarming for the world we live in.

It brings up the same old question, now with even more urgency: how do we know what’s real anymore? In a world where people of all ages already fall for half-truths online, technology like Nano Banana Pro takes the authenticity crisis to a whole new level.

When OpenAI launched its “most advanced image generator yet,” built into GPT-4o in March, our feeds were taken over by a flurry of Studio Ghibli-styled animations, raising serious copyright and ethical questions.

In recent years, AI image-generation tools have posed a threat not just to art and artists but also to ordinary people. From generating fake nudes to fabricating claims about anyone you want, AI misuse knows no bounds.

On November 8, an account called ‘PakVocals’ posted a video on X that claimed to show journalist Benazir Shah dancing in a nightclub, in an attempt to compromise the journalist’s credibility. In less than two weeks, the video garnered more than half a million views. The video was proven to be false.

Image-generation models learn from millions of images across the internet and the text associated with those images. In a process known as “diffusion”, training starts by progressively adding random noise to an image until its pixels no longer represent any specific thing; the model then learns to reverse that process, step by step, so it can recover an image from noise. These systems do not take copyright into account, and hence artistic styles are used without permission.
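For readers curious about what “adding noise and reversing it” looks like in practice, here is a toy sketch in Python. It is not how Nano Banana Pro or any specific product is implemented; the four-pixel “image”, the function names and the `alpha_bar` value are all made up for illustration. A real system trains a neural network to predict the noise, whereas this sketch simply reuses the true noise to show that a perfect prediction would bring the original image back.

```python
import math
import random


def add_noise(pixels, alpha_bar, rng):
    """Forward step: blend the image with Gaussian noise.

    alpha_bar is the share of the original signal that is kept
    (1.0 = clean image, close to 0.0 = almost pure noise).
    Returns the noisy pixels and the noise that was mixed in.
    """
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [
        math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * n
        for p, n in zip(pixels, noise)
    ]
    return noisy, noise


def remove_noise(noisy, predicted_noise, alpha_bar):
    """Reverse step: recover the image from an estimate of the noise."""
    return [
        (x - math.sqrt(1.0 - alpha_bar) * n) / math.sqrt(alpha_bar)
        for x, n in zip(noisy, predicted_noise)
    ]


rng = random.Random(0)
image = [0.1, 0.5, 0.9, 0.3]  # hypothetical four-pixel "image"

# Keep only 20% of the original signal; the rest is random noise.
noisy, noise = add_noise(image, alpha_bar=0.2, rng=rng)

# Assumption: a trained model would have to *predict* this noise.
# Here we reuse the true noise to show the process can be inverted.
restored = remove_noise(noisy, noise, alpha_bar=0.2)
```

The point of the sketch is that generation quality depends entirely on how well the model has learned to guess the noise, and it learns that from the images it was trained on.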

With image-generative tools such as Midjourney, DALL-E, and even a feature on Canva, made widely available to anyone with an internet connection and a monthly subscription, users can write a prompt and generate an image in a certain artist’s style, without, of course, asking or crediting said artist.

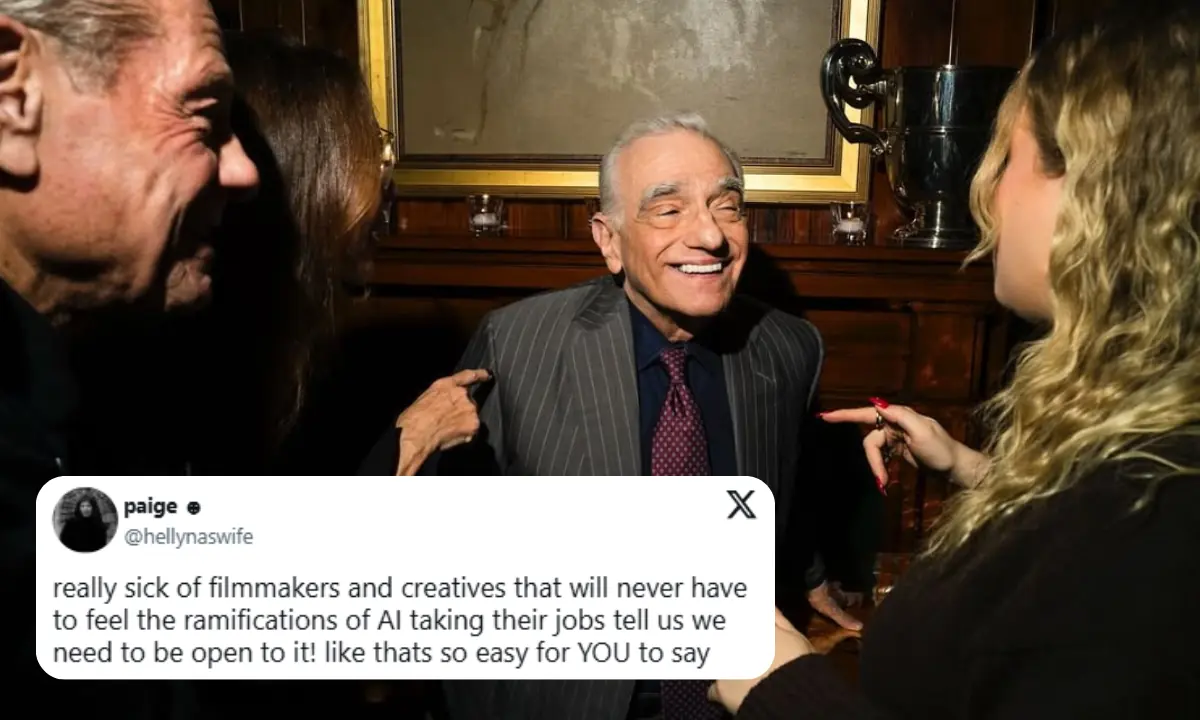

Even in the world of film, alarms are ringing. Jenna Ortega, while serving on the jury of the 22nd Marrakech International Film Festival alongside Parasite director Bong Joon Ho, warned that “it’s very easy to be terrified” by the “deep uncertainty” AI introduces into cinema.

“It feels like we’ve opened up Pandora’s box,” BuzzFeed quoted her as saying, reflecting on AI’s rapid takeover. “There are certain things AI just isn’t able to replicate. There’s beauty in difficulty; beauty in mistakes. A computer can’t do that. A computer has no soul.

“It’s nothing we’ll really be able to resonate with. And I don’t want to assume for the audience, but I would hope it gets to a point where AI becomes a sort of mental junk food — and we feel sick, and we don’t know why. Sometimes, audiences need to be deprived of something in order to appreciate it again.”

In a world where we’re already seeing the strangest things — children’s skincare lines, AI-generated films, driverless cars, and tech breakthroughs that feel like sci-fi — who knows what’s coming next?

Cover photo via X (@NanoBanana)
